Next.js from scratch in under a week

Last week, a single engineer at Cloudflare rebuilt the Next.js framework from scratch in one week. The result, Vinext, is a Vite-based drop-in replacement for Next.js. It builds production apps up to 4x faster and produces client bundles up to 57% smaller. The whole thing cost about $1,100 in tokens.

Yes, the latest generation of frontier models was largely responsible for making this possible, but it wouldn’t have happened if Next.js didn’t have a comprehensive Playwright end-to-end test suite.

The end-to-end tests are the specification of the Next.js framework. They describe in great detail how features are meant to work, encoding edge cases and regression fixes accumulated over years. End-to-end tests also care very little whether your underlying implementation uses Turbopack or Vite.
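To make the "tests as specification" idea concrete, here is a minimal sketch. It is not taken from the Next.js test suite and does not use Playwright; the `Router` interface, both implementations, and `routerSpec` are hypothetical names made up for illustration. The only point is that one behavior-level spec can judge two unrelated implementations.

```typescript
// Hypothetical contract: resolve a URL path against patterns such as "/posts/[id]".
type Match = { pattern: string; params: Record<string, string> };
interface Router {
  match(path: string): Match | null;
}

// Implementation A: compile each pattern to a regular expression.
function regexRouter(patterns: string[]): Router {
  const compiled = patterns.map((pattern) => {
    const names: string[] = [];
    const source = pattern
      .split("/")
      .map((seg) => {
        const dynamic = /^\[(\w+)\]$/.exec(seg);
        if (dynamic) {
          names.push(dynamic[1]);
          return "([^/]+)";
        }
        // Escape regex metacharacters in literal segments.
        return seg.replace(/[.*+?^${}()|[\]\\]/g, "\\$&");
      })
      .join("/");
    return { pattern, names, re: new RegExp(`^${source}$`) };
  });
  return {
    match(path) {
      for (const { pattern, names, re } of compiled) {
        const found = re.exec(path);
        if (found) {
          const params: Record<string, string> = {};
          names.forEach((name, i) => {
            params[name] = found[i + 1];
          });
          return { pattern, params };
        }
      }
      return null;
    },
  };
}

// Implementation B: compare path segments one by one, no regular expressions.
function segmentRouter(patterns: string[]): Router {
  return {
    match(path) {
      const parts = path.split("/");
      for (const pattern of patterns) {
        const segs = pattern.split("/");
        if (segs.length !== parts.length) continue;
        const params: Record<string, string> = {};
        const ok = segs.every((seg, i) => {
          const part = parts[i];
          if (seg.startsWith("[") && seg.endsWith("]")) {
            if (part === "") return false; // a dynamic segment must not be empty
            params[seg.slice(1, -1)] = part;
            return true;
          }
          return seg === part;
        });
        if (ok) return { pattern, params };
      }
      return null;
    },
  };
}

// The "specification": it only observes behavior, never how the router is built.
function routerSpec(make: (patterns: string[]) => Router): string[] {
  const router = make(["/about", "/posts/[id]"]);
  const cases: Array<{ path: string; expected: Match | null }> = [
    { path: "/about", expected: { pattern: "/about", params: {} } },
    { path: "/posts/42", expected: { pattern: "/posts/[id]", params: { id: "42" } } },
    { path: "/posts/", expected: null },
    { path: "/posts/42/edit", expected: null },
  ];
  return cases
    .filter((c) => JSON.stringify(router.match(c.path)) !== JSON.stringify(c.expected))
    .map((c) => `unexpected result for ${c.path}`);
}

// Run the same spec against both implementations and collect any failures.
const specFailures = {
  regex: routerSpec(regexRouter),
  segment: routerSpec(segmentRouter),
};
console.log(specFailures);
```

Swapping one router for the other changes nothing in `routerSpec`, which is the property that makes a suite like this reusable for a rewrite.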

After enough automated iterations against the test suite, they had a decent slop fork!

Your tests matter more than your implementation

Implementations have never been this cheap. But the right implementation still has a lot of value. So whatever can tell you whether your implementation is right also becomes very valuable.

“Heavier” tests like integration and end-to-end tests can describe what software should do very precisely, more precisely than written language. They can be the descriptors of what is right.

This is why our tests suddenly hold more value than our implementations.

I’m not saying that implementations are free. Architecture and system design still matter enormously. Deciding the shapes of data structures and communication boundaries between systems needs a lot of active thought. But the line-by-line implementation of a function has never mattered less - as long as it passes the tests.

Writing from scratch vs test tautology

This heavier emphasis on the value of tests has changed the way I write and review code. I feel a much stronger need to review the layouts and assertions of newly created test suites.

I spend most of my time thinking about and writing types, interfaces and tests from scratch. Once I feel like these pieces are in place, the implementation comes easily and I feel confident that it does what it’s meant to.

If I don’t put in the effort up front to describe my understanding of the domain through types and tests, I run a very high risk of getting a meaningless passing test suite.

AIs are extremely eager to please, and if you’ve only loosely defined the meaning of your domain, you’ll only get a loosely passing test suite. I’m sure many of you have seen a slightly too large AI-generated unit test suite that has plenty of assertions, few of which mean anything.

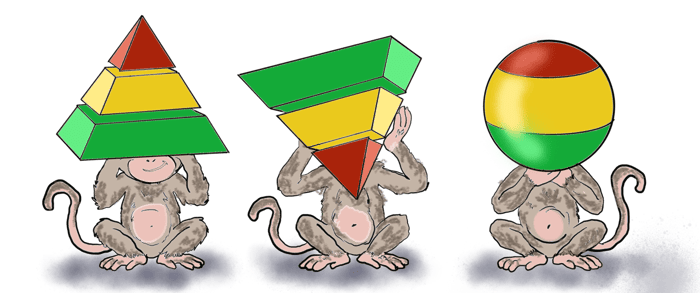
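As a small illustration of a test that passes without meaning anything, consider the sketch below. The `slugify` function and both test helpers are hypothetical examples, not code from any real project. The tautological test derives its expectation from the code under test, so it accepts an implementation that does nothing; the meaningful test states the expected output independently.

```typescript
type Slugify = (title: string) => string;

// A reasonable implementation: lowercase, collapse non-alphanumerics to "-", trim dashes.
const correctSlugify: Slugify = (title) =>
  title
    .trim()
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "-")
    .replace(/^-+|-+$/g, "");

// A broken implementation that returns its input unchanged.
const brokenSlugify: Slugify = (title) => title;

// Tautological: the "expected" value comes from the function being tested,
// so this returns true for any deterministic function that returns a string.
function tautologicalTest(slugify: Slugify): boolean {
  const input = "Hello, World!";
  const expected = slugify(input);
  return typeof expected === "string" && slugify(input) === expected;
}

// Meaningful: the expectations are written down from an understanding of the domain.
function meaningfulTest(slugify: Slugify): boolean {
  return (
    slugify("Hello, World!") === "hello-world" &&
    slugify("  Already-slugged  ") === "already-slugged" &&
    slugify("???") === ""
  );
}

const slugReport = {
  brokenPassesTautology: tautologicalTest(brokenSlugify),
  brokenPassesMeaningful: meaningfulTest(brokenSlugify),
  correctPassesMeaningful: meaningfulTest(correctSlugify),
};
console.log(slugReport);
```

Only the second test can tell the two implementations apart, which is why reviewing the assertions matters more than counting them.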

Relative value of test kinds

The testing pyramid has long served as a loose foundation for how to design a test suite: use lots of lightweight unit tests, and fewer of the heavier integration and end-to-end tests. The trade-off is straightforward. Make sure that you can test your system in enough depth without making your suite too slow or costly.

I think it’s worth reconsidering this kind of model while the way we build software changes.

At the top end of the pyramid, the value of integration and end-to-end tests has changed. Why not write and maintain more of them?

At the bottom end of the pyramid, the implementation matters less. How extensive should unit test suites be?

Meta recently published a paper on “Just-in-Time Tests”. The idea is to temporarily expand the size of unit test suites using AI in order to help surface bugs in code changes before they land. These temporarily added unit tests are not stored in the codebase and are discarded after they’ve been used. By doing this, they could catch more regressions and help reduce the human review burden.

Since unit tests are more specific to an implementation, it could make sense to use them extensively, but only as short-term artifacts rather than long-term domain specifications.
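The sketch below illustrates the general shape of a throwaway check. It is not the method from Meta's paper: it swaps in a simple differential comparison between the old and new version of a function, and `PriceFn`, `priceBefore`, `priceAfter`, and `ephemeralChecks` are hypothetical names. The checks exist only in memory while a change is being reviewed and are never written to the codebase.

```typescript
// Hypothetical function under change: apply a percentage discount to a price in cents.
type PriceFn = (cents: number, percentOff: number) => number;

// Version on the main branch: rounds to the nearest cent.
const priceBefore: PriceFn = (cents, percentOff) =>
  Math.round((cents * (100 - percentOff)) / 100);

// Version in the proposed change: silently switches to rounding down.
const priceAfter: PriceFn = (cents, percentOff) =>
  Math.floor((cents * (100 - percentOff)) / 100);

// Generate inputs on the fly, compare both versions, and report differences.
// Nothing here is saved; the checks are discarded once the report is read.
function ephemeralChecks(oldImpl: PriceFn, newImpl: PriceFn): string[] {
  const differences: string[] = [];
  for (const cents of [0, 1, 99, 199, 1000]) {
    for (const percentOff of [0, 10, 33, 100]) {
      const was = oldImpl(cents, percentOff);
      const now = newImpl(cents, percentOff);
      if (was !== now) {
        differences.push(`price(${cents}, ${percentOff}): was ${was}, now ${now}`);
      }
    }
  }
  return differences;
}

const behaviorChanges = ephemeralChecks(priceBefore, priceAfter);
console.log(behaviorChanges);
```

A reviewer would still have to decide whether each reported difference is a regression or an intended change; the throwaway checks only surface it.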

Should I be worried?

If your tests are suddenly so valuable, should you be protecting them?

In a hotly debated GitHub issue in the TLDraw repository, TLDraw’s founder Steve jokingly suggested moving their tests to a closed-source repository to reduce the risk of slop forks. A “slop fork” is an AI-generated clone bootstrapped from an existing test suite.

Steve’s response explains much better than I can that tests are valuable, but that there’s a lot more to it:

- Speed is more important; closed-source tests would be a huge pain

- Taste / good product design / serious maintenance will be more of a deciding factor to your success

Looking forward

Have another think about the new value your tests have as a tool for software creation.

If you start viewing them as longer-term artifacts that can help you with both your current and future implementations, it might motivate you to spend more time on them up front.

- Take the design of your tests and the assertions that they contain seriously.

- Don’t just blindly accept the pre-written tests from your AI that are guaranteed to pass and guaranteed to mean less.

- Find a blend of test kinds that works for your project. It’s always better to have several types of tests rather than many of a single kind.

Most importantly, don’t tolerate slop, and have fun!